Regularized Diffusion Process on Bidirectional Context for Object Retrieval

Introduction

The goal of this work is to improve object retrieval with the diffusion process, which can be viewed as a form of similarity learning, affinity learning, or re-ranking. Given an input similarity W, the diffusion process aims to derive a more discriminative similarity A. The framework is generic and applies to different retrieval settings. Three algorithms are proposed:

- Regularized Diffusion Process (RDP)

It addresses the simple retrieval setting that is common in the retrieval domain, such as the benchmark tests on the Ukbench, Holidays and Oxford5K datasets.

- Asymmetric Regularized Diffusion Process (ARDP)

It addresses cross-modal retrieval, where the query data and the database come from two different domains.

- Hypergraph Regularized Diffusion Process (HRDP)

It addresses the retrieval setting where the input similarity is given as non-pairwise relationships.

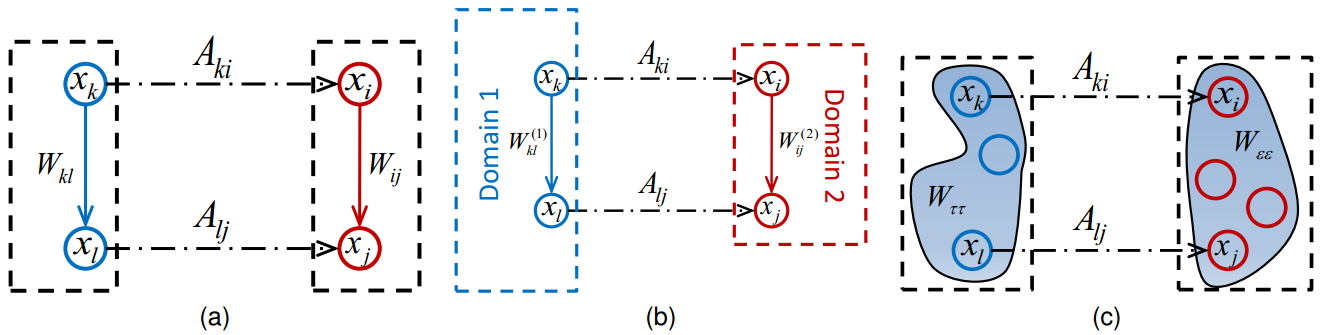

The figure above illustrates the principles of these three algorithms. Each algorithm regularizes the relationships among four vertices on different affinity graphs; see the paper for details.
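As a rough illustration of what deriving a more discriminative similarity A from an input similarity W can look like, the sketch below runs a generic diffusion update of the kind surveyed in [1]. It is not the released code and is not claimed to be the exact RDP formulation. The update rule `A <- alpha * S A S^T + (1 - alpha) * I`, the function names (`normalize`, `diffuse`, `rank`), and the parameters `alpha` and `n_iter` are assumptions chosen for illustration only.

```python
import numpy as np


def normalize(W):
    """Symmetrically normalize a non-negative similarity: S = D^-1/2 W D^-1/2."""
    W = np.asarray(W, dtype=float)
    d = W.sum(axis=1)
    # Guard against isolated vertices with zero degree.
    d_inv_sqrt = 1.0 / np.sqrt(np.maximum(d, 1e-12))
    return W * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def diffuse(W, alpha=0.9, n_iter=100):
    """Iterate A <- alpha * S A S^T + (1 - alpha) * I (illustrative update).

    W      : (n, n) symmetric, non-negative input similarity
    alpha  : hypothetical trade-off weight in (0, 1)
    n_iter : number of update steps
    """
    S = normalize(W)
    identity = np.eye(S.shape[0])
    A = identity.copy()
    for _ in range(n_iter):
        A = alpha * S @ A @ S.T + (1.0 - alpha) * identity
    return A


def rank(A, query_index):
    """Return database indices sorted by decreasing diffused similarity."""
    return np.argsort(-A[query_index])


# Toy usage: six 1-D points forming two well-separated groups.
points = np.array([0.0, 0.1, 0.2, 5.0, 5.1, 5.2])
W = np.exp(-(points[:, None] - points[None, :]) ** 2)
A = diffuse(W, alpha=0.9, n_iter=500)
ranking = rank(A, 0)  # retrieval order for the first point
```

Because `alpha` is below 1 and the normalized matrix has spectral radius at most 1, this iteration converges to a fixed point, so `n_iter` only needs to be large enough for the update to stabilize. For large databases, W is usually sparsified (for example with a k-nearest-neighbor graph) before diffusion; that step is omitted here for brevity.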

Performances

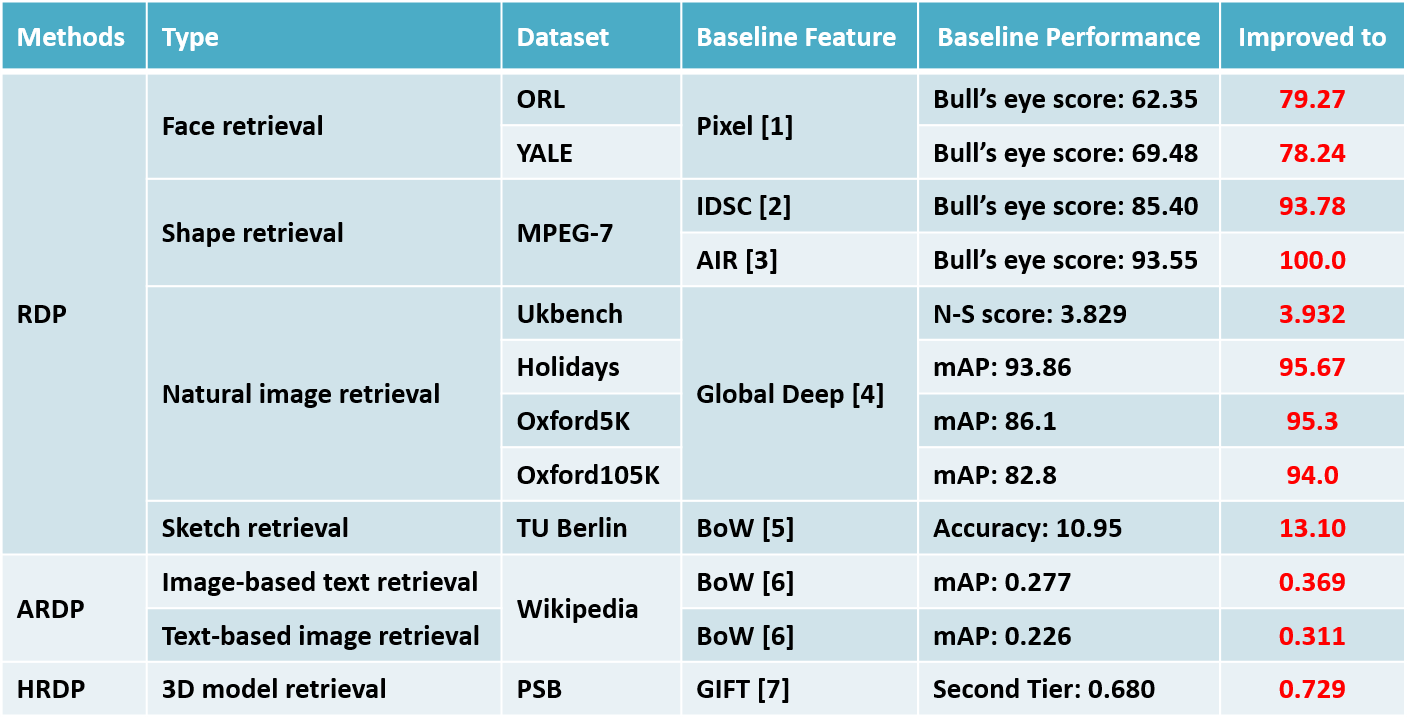

Experiments are conducted with different data modalities, different baseline features, and different retrieval settings. The results are shown in the table above, with the results of this work highlighted in red.

Notes:

- Most of the baseline features are publicly available. Refer to the project pages of Refs. [1-7] to download them.

- On the Oxford5K and Oxford105K datasets, we use an approximate version of RDP inspired by regional diffusion [8]. Refer to the paper for more details.

Code

The code for this work can be downloaded here. If you find the code useful, please cite the following papers.

@article{RDP_TPAMI,

title={Regularized Diffusion Process on Bidirectional Context for Object Retrieval},

author={Bai, Song and Bai, Xiang and Tian, Qi and Latecki, Longin Jan},

journal={TPAMI},

year={2018}

}

@inproceedings{RDP_AAAI,

title={Regularized Diffusion Process for Visual Retrieval},

author={Bai, Song and Bai, Xiang and Tian, Qi and Latecki, Longin Jan},

pages={3967--3973},

booktitle={AAAI},

year={2017}

}

Note: this work can only handle a single input similarity. If you want to fuse multiple similarities within the diffusion framework, you might be interested in “Ensemble Diffusion for Retrieval”, accepted at ICCV 2017, and its code.

References

[1] M. Donoser and H. Bischof, “Diffusion processes for retrieval revisited,” in CVPR, 2013.

[2] H. Ling and D. W. Jacobs, “Shape classification using the inner-distance,” TPAMI, 2007.

[3] R. Gopalan, P. Turaga, and R. Chellappa, “Articulation-invariant representation of non-planar shapes,” in ECCV, 2010.

[4] A. Gordo, J. Almazan, J. Revaud, and D. Larlus, “Deep image retrieval: Learning global representations for image search,” in ECCV, 2016.

[5] M. Eitz, J. Hays, and M. Alexa, “How do humans sketch objects?” ACM Transactions on Graphics, 2012.

[6] J. Costa Pereira, E. Coviello, G. Doyle, N. Rasiwasia, G. Lanckriet, R. Levy, and N. Vasconcelos, “On the role of correlation and abstraction in cross-modal multimedia retrieval,” TPAMI, 2014.

[7] S. Bai, X. Bai, Z. Zhou, Z. Zhang, and L. J. Latecki, “GIFT: A real-time and scalable 3D shape search engine,” in CVPR, 2016.

[8] A. Iscen, G. Tolias, Y. Avrithis, T. Furon, and O. Chum, “Efficient diffusion on region manifolds: Recovering small objects with compact CNN representations,” in CVPR, 2017.